PDF(868 KB)

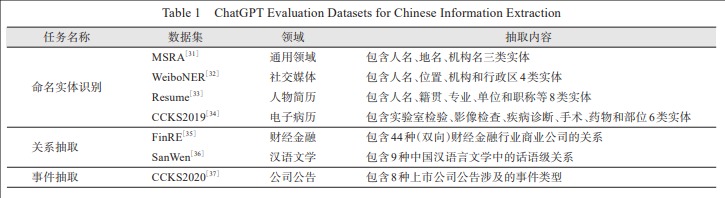

ChatGPT中文信息抽取能力测评——以三种典型的抽取任务为例

Extracting Chinese Information with ChatGPT: An Empirical Study by Three Typical Tasks
